Oil & Gas Investor: Batteries Cannot Save the Grid or the Planet

A Special Report excerpted from Oil & Gas Investor, November 2019

Article by Mark P. Mills

Illustration by Stefano Morri

The dream of a battery-centric energy supply is seductive, but the reality is that proponents of such a transformation misunderstand the capabilities and limitations of battery technology.

Batteries are a central feature of “new energy economy” aspirations. It would indeed revolutionize the world to find a technology that could store electricity as effectively and cheaply as, say, oil in a barrel, or natural gas in an underground cavern. Such electricity-storage hardware would render it unnecessary even to build domestic power plants. One could imagine an OKEC (Organization of Kilowatt-Hour Exporting Countries) that shipped barrels of electrons around the world from nations where the cost to fill those “barrels” was lowest: solar arrays in the Sahara, coal mines in Mongolia (out of reach of Western regulators), or the great rivers of Brazil.

But in the universe that we live in, the cost to store energy in grid-scale batteries is about 200-fold more than the cost to store natural gas to generate electricity when it’s needed. That’s why we store, at any given time, months’ worth of national energy supply in the form of natural gas or oil.

Battery storage is quite another matter. Consider Tesla, the world’s best-known battery maker: $200,000 worth of Tesla batteries, which collectively weigh over 20,000 pounds, are needed to store the energy equivalent of one barrel of oil. A barrel of oil, meanwhile, weighs 300 pounds and can be stored in a $20 tank. Those are the realities of today’s lithium batteries. Even a 200% improvement in underlying battery economics and technology won’t close such a gap.
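The size of the gap follows directly from the figures in the paragraph above. The Python sketch below uses only those numbers and treats “a 200% improvement” as batteries becoming three times cheaper and lighter, which is an interpretive assumption; the function name `storage_gap` is hypothetical.

```python
# Figures quoted in the article for storing one barrel of oil-equivalent energy.
BATTERY_COST_USD = 200_000   # Tesla batteries
BATTERY_WEIGHT_LB = 20_000   # "over 20,000 pounds" of batteries
BARREL_WEIGHT_LB = 300       # one barrel of oil
TANK_COST_USD = 20           # a tank to hold that barrel


def storage_gap(improvement_pct: float = 0.0) -> tuple[float, float]:
    """Return (cost ratio, weight ratio) of batteries vs. a barrel of oil.

    improvement_pct is an assumed across-the-board gain in battery cost and
    weight; 200 means batteries become three times better (1 + 200/100).
    """
    factor = 1 + improvement_pct / 100
    cost_ratio = (BATTERY_COST_USD / factor) / TANK_COST_USD
    weight_ratio = (BATTERY_WEIGHT_LB / factor) / BARREL_WEIGHT_LB
    return cost_ratio, weight_ratio


today = storage_gap()
improved = storage_gap(200)
print(f"today: cost x{today[0]:,.0f}, weight x{today[1]:.0f}")        # cost x10,000, weight x67
print(f"+200%: cost x{improved[0]:,.0f}, weight x{improved[1]:.0f}")  # cost x3,333, weight x22
```

On these numbers, the batteries cost 10,000 times as much as the tank and weigh about 67 times as much as the oil, in line with the roughly 60-to-1 weight ratio cited later in the article. A threefold improvement still leaves a cost gap of more than 3,000 to 1.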

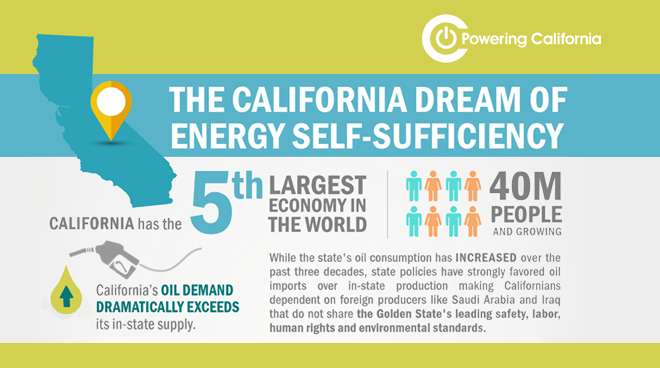

Nonetheless, policymakers in America and Europe enthusiastically embrace programs and subsidies to vastly expand the production and use of batteries at grid scale. Astonishing quantities of batteries will be needed to keep country-level grids energized, and the level of mining required for the underlying raw materials would be epic. For the U.S., at least, given where the materials are mined and where batteries are made, imports would increase radically. Perspective on each of these realities follows.

How many batteries would it take to light the nation?

A grid based entirely on wind and solar requires preparing for more than the normal daily variability of wind and sun; it also means preparing for the frequency and duration of periods with far less combined wind and sunlight, and even for periods with neither. While uncommon, such a combined event (daytime continental cloud cover with no significant wind anywhere, or nighttime with no wind) has occurred more than a dozen times over the past century, effectively once every decade. On these occasions, a combined wind/solar grid would be unable to produce more than a tiny fraction of the nation’s electricity needs. There have also been frequent one-hour periods when 90% of the national electric supply would have disappeared.

So how many batteries would be needed to store, say, not two months’ but two days’ worth of the nation’s electricity? The $5 billion Tesla “Gigafactory” in Nevada is currently the world’s biggest battery manufacturing facility. Its total annual production could store three minutes’ worth of annual U.S. electricity demand. Thus, fabricating enough batteries to store two days’ worth of U.S. electricity demand would require 1,000 years of Gigafactory production.
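The 1,000-year figure is simple scaling. This sketch assumes demand is uniform, so that storage needs grow linearly with the number of days covered; `factory_years_needed` is a hypothetical helper, and the three-minutes-per-year input is the article’s figure.

```python
MINUTES_PER_DAY = 24 * 60

# Article's figure: one year of Gigafactory output stores about 3 minutes'
# worth of U.S. electricity demand.
MINUTES_STORED_PER_FACTORY_YEAR = 3


def factory_years_needed(days_of_storage: float) -> float:
    """Years of Gigafactory output needed to store `days_of_storage` days of demand.

    Assumes uniform demand, so minutes of storage scale linearly with days.
    """
    return days_of_storage * MINUTES_PER_DAY / MINUTES_STORED_PER_FACTORY_YEAR


print(factory_years_needed(2))  # 2 days = 2,880 minutes -> 960.0
```

The exact result, 960 years, is what the article rounds to 1,000. Taking two months as 60 days, the same function gives 28,800 years.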

Wind/solar advocates propose to minimize battery usage with enormously long transmission lines, based on the observation that it is always windy or sunny somewhere. While theoretically feasible (though the premise is not always true, even at country-level geographies), the length of transmission needed to reach somewhere “always” sunny or windy also entails substantial reliability and security challenges. (And long-distance transport of energy by wire is twice as expensive as by pipeline.)

Building massive quantities of batteries would have epic implications for mining

A key rationale for the pursuit of a new energy economy is to reduce environmental externalities from the use of hydrocarbons. While the focus these days is mainly on the putative long-term effects of carbon dioxide, all forms of energy production entail various unregulated externalities inherent in extracting, moving and processing minerals and materials.

Radically increasing battery production will dramatically affect mining, as well as the energy used to access, process and move minerals and the energy needed for the battery fabrication process itself. About 60 pounds of batteries are needed to store the energy equivalent to that in 1 pound of hydrocarbons. Meanwhile, 50 to 100 pounds of various materials are mined, moved and processed for 1 pound of battery produced. Such underlying realities translate into enormous quantities of minerals—such as lithium, copper, nickel, graphite, rare earths and cobalt—that would need to be extracted from the earth to fabricate batteries for grids and cars. A battery-centric future means a world mining gigatons more materials. And this says nothing about the gigatons of materials needed to fabricate wind turbines and solar arrays, too.
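Chaining the two ratios in the paragraph above gives the material burden per unit of stored energy. This is a minimal sketch that treats both figures as fixed multipliers and counts only battery fabrication (not wind turbines or solar arrays); the constant and function names are hypothetical.

```python
# Article's figures, treated here as fixed multipliers (a simplification).
BATTERY_LB_PER_LB_HYDROCARBON = 60   # ~60 lb of batteries per lb of hydrocarbon energy
MINED_LB_PER_LB_BATTERY = (50, 100)  # 50 to 100 lb of material per lb of battery


def mined_material_range(lb_hydrocarbon_equivalent: float) -> tuple[float, float]:
    """Low/high pounds of material mined, moved and processed to build batteries
    that store the energy of `lb_hydrocarbon_equivalent` pounds of hydrocarbons."""
    battery_lb = lb_hydrocarbon_equivalent * BATTERY_LB_PER_LB_HYDROCARBON
    low, high = MINED_LB_PER_LB_BATTERY
    return battery_lb * low, battery_lb * high


print(mined_material_range(1))  # (3000, 6000)
```

For one 300-pound barrel of oil, `mined_material_range(300)` returns 900,000 to 1,800,000 pounds of material.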

Even without a new energy economy, the mining required to make batteries will soon dominate the production of many minerals. Lithium battery production today already accounts for about 40% and 25%, respectively, of all lithium and cobalt mining. In an all-battery future, global mining would have to expand by more than 200% for copper, by at least 500% for minerals like lithium, graphite and rare earths, and far more than that for cobalt. Then there are the hydrocarbons and electricity needed to undertake all the mining activities and to fabricate the batteries themselves. In rough terms, it requires the energy equivalent of about 100 barrels of oil to fabricate a quantity of batteries that can store a single barrel of oil-equivalent energy.


Given the regulatory hostility to mining on the U.S. continent, a battery-centric energy future virtually guarantees more mining elsewhere and rising import dependencies for America. Most of the relevant mines in the world are in Chile, Argentina, Australia, Russia, the Congo and China. Notably, the Democratic Republic of Congo produces 70% of global cobalt, and China refines 40% of that output for the world.

China already dominates global battery manufacturing and is on track to supply nearly two-thirds of all production by 2020. The relevance for the new energy economy vision: 70% of China’s grid is fueled by coal today and will still be at 50% in 2040. This means that, over the life span of the batteries, there would be more carbon-dioxide emissions associated with manufacturing them than would be offset by using those batteries to, say, replace internal combustion engines.

Transforming personal transportation from hydrocarbon-burning to battery-propelled vehicles is another central pillar of the “new energy economy.” Electric vehicles (EVs) are expected not only to replace petroleum on the roads but also to serve as backup storage for the electric grid.

Lithium batteries have finally enabled EVs to become reasonably practical. Tesla, which now sells more cars in the top price category in America than does Mercedes-Benz, has inspired a rush of the world’s manufacturers to produce appealing battery-powered vehicles. This has emboldened bureaucratic aspirations for outright bans on the sale of internal combustion engines, notably in Germany, France, England and, unsurprisingly, California.

Such a ban is not easy to imagine. Optimists forecast that the number of EVs in the world will rise from nearly 4 million today to 400 million in two decades. A world with 400 million EVs by 2040 would decrease global oil demand by barely 6%. This sounds counterintuitive, but the numbers are straightforward. There are about 1 billion automobiles today, and they use about 30% of the world’s oil. (Heavy trucks, aviation, petrochemicals, heat, etc., use the rest.) By 2040, there would be an estimated 2 billion cars in the world. Four hundred million EVs would amount to 20% of all the cars on the road, and would thus replace 20% of the cars’ roughly 30% share, or about 6% of petroleum demand.
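The 6% figure is a product of two shares. The sketch below assumes, as the article’s arithmetic implicitly does, that every car uses about the same amount of oil and that cars still account for about 30% of oil demand in 2040; `oil_demand_displaced` is a hypothetical helper.

```python
def oil_demand_displaced(evs: float, total_cars: float, car_share_of_oil: float) -> float:
    """Fraction of world oil demand displaced if `evs` of `total_cars` cars are electric.

    Simplifying assumption: every car uses the same amount of oil, so EVs
    displace a share of car oil use equal to their share of the fleet.
    """
    ev_fraction_of_fleet = evs / total_cars
    return ev_fraction_of_fleet * car_share_of_oil


# Article's 2040 scenario: 400 million EVs among ~2 billion cars,
# with cars still using ~30% of the world's oil.
share = oil_demand_displaced(400e6, 2e9, 0.30)
print(f"{share:.0%}")  # 6%
```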

In any event, batteries don’t represent a revolution in personal mobility equivalent to, say, going from the horse-and-buggy to the car—an analogy that has been invoked. Driving an EV is more analogous to changing what horses are fed and importing the new fodder.


Moore’s Law misapplied

Faced with all the realities outlined above regarding green technologies, new energy economy enthusiasts nevertheless believe that true breakthroughs are yet to come and are even inevitable. That’s because, so it is claimed, energy tech will follow the same trajectory as that seen in recent decades with computing and communications. The world will yet see the equivalent of an Amazon or “Apple of clean energy.”

This idea is seductive because of the astounding advances in silicon technologies that so few forecasters anticipated decades ago. It is an idea that renders moot any cautions that wind/solar/batteries are too expensive today: such caution is seen as foolish and shortsighted, analogous to asserting, circa 1980, that the average citizen would never be able to afford a computer, or doubting, in 1984 (the year that the world’s first cell phone was released), that a billion people would ever own a cell phone, when one cost $9,000 (in today’s dollars) and was a 2-pound “brick” with a 30-minute talk time.

Today’s smartphones are not only far cheaper; they are far more powerful than a room-size IBM mainframe from 30 years ago. That transformation arose from engineers inexorably shrinking the size and energy appetite of transistors and consequently increasing their number per chip roughly twofold every two years—the “Moore’s Law” trend, named for Intel co-founder Gordon Moore.

The compound effect of that kind of progress has indeed caused a revolution. During the past 60 years, the Moore’s Law trend has improved the energy efficiency of logic engines by over a billionfold. But a similar transformation in how energy is produced or stored isn’t just unlikely; it can’t happen with the physics we know today.
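A quantity that doubles every two years for 60 years grows by a factor of 2^30, about 1.07 billion, which is consistent with the “over a billionfold” figure. The sketch assumes a perfectly constant doubling period, an idealization of a trend whose pace varied over the decades; `compound_growth` is a hypothetical helper.

```python
def compound_growth(years: float, doubling_period_years: float = 2.0) -> float:
    """Growth factor for a quantity that doubles every `doubling_period_years`.

    Assumes a constant doubling period (an idealization of Moore's Law).
    """
    return 2 ** (years / doubling_period_years)


factor = compound_growth(60)
print(f"{factor:.3e}")  # 1.074e+09
```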

In the world of people, cars, planes and large-scale industrial systems, increasing speed or carrying capacity causes hardware to expand, not shrink. The energy needed to move a ton of people, heat a ton of steel or silicon, or grow a ton of food is determined by properties of nature whose boundaries are set by laws of gravity, inertia, friction, mass and thermodynamics.

If combustion engines, for example, could achieve the kind of scaling efficiency that computers have since 1971 (the year Intel introduced the 4004, the first commercially available microprocessor), a car engine would generate a thousandfold more horsepower and shrink to the size of an ant. With such an engine, a car could actually fly, very fast.

If photovoltaics scaled by Moore’s Law, a single postage stamp-sized solar array would power the Empire State Building. If batteries scaled by Moore’s Law, a battery the size of a book, costing 3 cents, could power an A380 to Asia.

But only in the world of comic books does the physics of propulsion or energy production work like that. In our universe, power scales the other way.

An ant-size engine (which has been built) produces roughly one hundred-thousandth the power of a Prius. An ant-size solar PV array (also feasible) produces about one-thousandth the energy of an ant’s biological muscles. The energy equivalent of the aviation fuel actually used by an aircraft flying to Asia would take $60 million worth of Tesla-type batteries weighing five times more than that aircraft.

The challenge in storing and processing information using the smallest possible amount of energy is distinct from the challenge of producing energy, or of moving or reshaping physical objects. The two domains entail different laws of physics.

The world of logic is rooted in simply knowing and storing the fact of the binary state of a switch—i.e., whether it is on or off. Logic engines don’t produce physical action but are designed to manipulate the idea of the numbers zero and one. Unlike engines that carry people, logic engines can use software to do things such as compress information through clever mathematics and thus reduce energy use. No comparable compression options exist in the world of humans and hardware.
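The compression point can be illustrated with the Python standard library’s `zlib` module: redundant data shrinks to a fraction of its size and is restored exactly, so fewer bits have to be stored or moved. The sample input is deliberately repetitive and hypothetical; real data compress less predictably, and the sketch shows only the reduction in bits, not the resulting energy saving.

```python
import zlib

# Highly repetitive sample data (hypothetical), standing in for the
# redundancy that much real-world information contains.
message = b"on off " * 1_000  # 7,000 bytes

compressed = zlib.compress(message)
restored = zlib.decompress(compressed)

assert restored == message  # lossless: the original bytes come back exactly
print(len(message), len(compressed))  # far fewer bytes to store or move
```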

Of course, wind turbines, solar cells and batteries will continue to improve significantly in cost and performance; so will drilling rigs and combustion turbines. And, of course, Silicon Valley information technology will bring important, even dramatic, efficiency gains in the production and management of energy and physical goods. But the outcomes won’t be as miraculous as the invention of the integrated circuit, or the discovery of petroleum or nuclear fission.

Mark P. Mills is a senior fellow at the Manhattan Institute and a faculty fellow at Northwestern University’s School of Engineering and Applied Science. He is also a strategic partner with Cottonwood Venture Partners, an energy tech venture fund. He holds a degree in physics from Queen’s University in Ontario, Canada.